Speech Perception in Audiovisual Communication

Hans Rutger Bosker heads the SPEAC lab at the Donders Institute of Radboud University, Nijmegen, The Netherlands.

Roos, Matteo, and Hans Rutger giving a demo at the Speech Science Festival, Rotterdam, 2025


Demos

Try out these audiovisual illusions, speech tricks, and other hocus-pocus…

Manual McGurk effect

How hands help us hear…

Conjuring up words that were never spoken

"That’s one small step for (a?) man…"

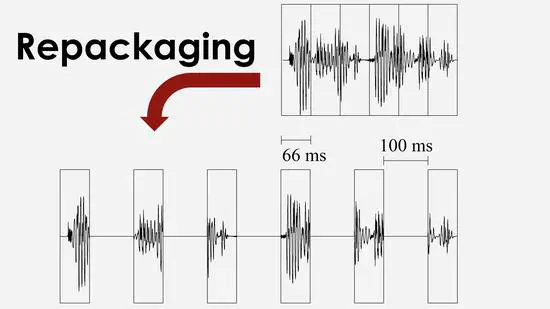

Repackaging speech

Making unintelligible speech intelligible again…

Cocktail party listening

Trying to attend to one talker while ignoring others…

Look and listen

How your eyes betray what you think you hear…

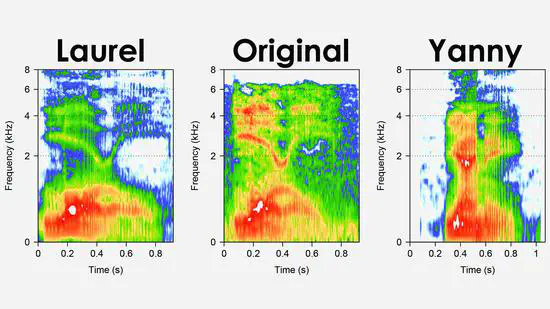

Laurel or Yanny?

Making you hear Yanny when previously you heard Laurel…

Lombard speech

How speaking up changes your voice…